Built for Live Operations

Connect IP cameras and USB cameras, monitor the image in real time, add useful context on top of the video, and send the feed where it needs to go.

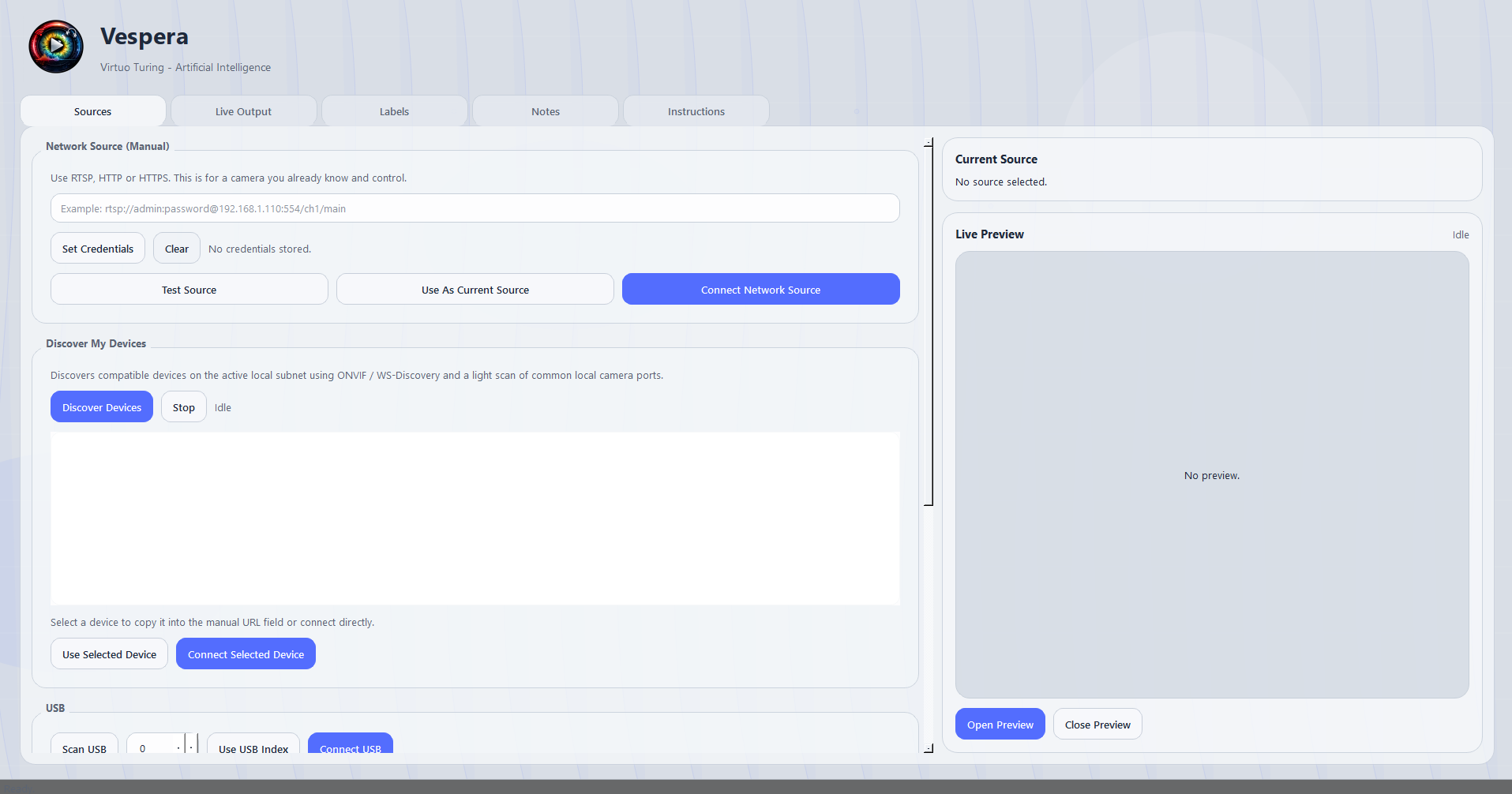

Véspera is a lightweight graphical app for connecting cameras, viewing live video, adding overlays, and streaming to major platforms. It supports RTSP, HTTP, HTTPS, and USB cameras, and it discovers devices through ONVIF / WS-Discovery and scans of common camera ports. It shows a live preview, overlays date/time, location, and notes, and offers optional AI detection for people, dogs, cats, and up to 80 object classes. It streams to YouTube, Twitch, Facebook Live, Restream, Cloudflare Stream, Vimeo, or any custom RTMP/RTMPS destination. It can run on CPU alone, so it does not require a GPU and runs well even on laptops.
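For readers unfamiliar with ONVIF / WS-Discovery, the following is a minimal sketch of how such a discovery probe generally works. It is not Véspera's implementation. The function names (`build_probe`, `parse_xaddrs`, `discover`) are hypothetical and chosen for illustration. The multicast address, port, and XML namespaces come from the WS-Discovery and ONVIF specifications.

```python
import socket
import uuid
import xml.etree.ElementTree as ET

# Standard WS-Discovery multicast group and port (IPv4).
WSD_MULTICAST = ("239.255.255.250", 3702)
WSD_NS = "http://schemas.xmlsoap.org/ws/2005/04/discovery"

# SOAP 1.2 Probe asking for ONVIF network video transmitters.
PROBE_TEMPLATE = """<?xml version="1.0" encoding="UTF-8"?>
<e:Envelope xmlns:e="http://www.w3.org/2003/05/soap-envelope"
            xmlns:w="http://schemas.xmlsoap.org/ws/2004/08/addressing"
            xmlns:d="http://schemas.xmlsoap.org/ws/2005/04/discovery"
            xmlns:dn="http://www.onvif.org/ver10/network/wsdl">
  <e:Header>
    <w:MessageID>urn:uuid:{message_id}</w:MessageID>
    <w:To>urn:schemas-xmlsoap-org:ws:2005:04:discovery</w:To>
    <w:Action>http://schemas.xmlsoap.org/ws/2005/04/discovery/Probe</w:Action>
  </e:Header>
  <e:Body>
    <d:Probe>
      <d:Types>dn:NetworkVideoTransmitter</d:Types>
    </d:Probe>
  </e:Body>
</e:Envelope>"""


def build_probe() -> bytes:
    """Return a Probe message with a fresh MessageID (hypothetical helper)."""
    return PROBE_TEMPLATE.format(message_id=uuid.uuid4()).encode("utf-8")


def parse_xaddrs(response: bytes) -> list[str]:
    """Extract service URLs (XAddrs) from a ProbeMatches response."""
    try:
        root = ET.fromstring(response)
    except ET.ParseError:
        return []  # ignore datagrams that are not well-formed XML
    urls: list[str] = []
    for node in root.iter(f"{{{WSD_NS}}}XAddrs"):
        urls.extend((node.text or "").split())
    return urls


def discover(timeout: float = 2.0) -> list[str]:
    """Send one probe and collect XAddrs until the socket times out."""
    sock = socket.socket(socket.AF_INET, socket.SOCK_DGRAM, socket.IPPROTO_UDP)
    sock.settimeout(timeout)
    found: set[str] = set()
    try:
        sock.sendto(build_probe(), WSD_MULTICAST)
        while True:
            try:
                data, _addr = sock.recvfrom(65535)
            except socket.timeout:
                break
            found.update(parse_xaddrs(data))
    finally:
        sock.close()
    return sorted(found)
```

Calling `discover()` on a network with ONVIF cameras would typically return their device-service URLs. Only run it on networks you are authorized to scan.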

Built for live camera workflows, fast overlays, and direct streaming delivery.

Find devices on the local network, enable object detection when needed, and, where legally permitted, use facial recognition, body-based recognition, clothing-based recognition, and person re-identification (ReID). Publish streams directly to major live platforms or any custom RTMP/RTMPS target.

Read this carefully before enabling any person-related analytics, especially facial recognition, body-based recognition, clothing-based recognition, or person re-identification.

This text is provided for informational and compliance-warning purposes only. It is not legal advice, does not create an attorney-client relationship, and must not be relied upon as a substitute for legal advice from qualified counsel. Any user, reseller, integrator, deployer, operator, customer, or public authority considering any sensitive, high-risk, unusual, or legally uncertain deployment of Véspera must consult qualified legal counsel and, where applicable, the competent data protection, privacy, surveillance, labor, consumer, law enforcement, or telecommunications authorities in each jurisdiction where the Software, any connected camera, or any related data is used, deployed, stored, transmitted, or accessed.

Véspera is a software platform that can connect a camera feed to a streaming environment and apply automated analysis, including object detection across multiple classes and, in some configurations, person-related analysis such as person presence detection, facial recognition, body-based recognition, silhouette-based recognition, gait-like recognition, or clothing-based recognition.

This notice exists to state, clearly and without marketing varnish, that these functions create legal, technical, operational, and ethical risks. Those risks increase materially when the Software is used to observe, single out, track, identify, classify, or make decisions about human beings.

Use of Véspera may be lawful, restricted, heavily regulated, or prohibited depending on the country, state, city, industry, exact deployment design, camera angle, data retention model, purpose, user role, and whether any person is identifiable directly or indirectly. The fact that a technical function exists does not mean it is lawful to activate it. The fact that another company uses similar technology does not mean the deployment is lawful either.

Local law, sector-specific rules, public-space rules, workplace rules, consumer rules, criminal procedure rules, and biometric privacy rules may all apply at the same time.

All person-related outputs generated by Véspera are probabilistic, inferential, and error-prone to some degree. They are not certainty machines. They may generate false positives, false negatives, missed detections, duplicate detections, identity confusion, and biased or uneven performance across contexts, environments, demographics, devices, and lighting conditions.

This warning is especially important for body-based recognition, silhouette-based recognition, gait-like recognition, and clothing-based recognition. Those methods are particularly vulnerable to error because they may be affected by ordinary and frequent changes such as:

Accordingly, body-based or clothing-based recognition must be treated as a high-risk indicator only, never as conclusive proof of identity. In many cases it may be less reliable than users assume and more legally dangerous than users expect.

Facial recognition also carries material risk. A facial match score, alert, shortlist, or similarity output does not establish identity as a matter of fact or law. It is not evidence of guilt, wrongdoing, intent, trespass, fraud, or dangerousness. Human review does not magically cure a bad system, but the absence of meaningful human review makes things worse.
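A rough base-rate calculation can illustrate why a match alert is not proof of identity. The sketch below is illustrative only. The function name `expected_alerts` and every number in the usage example are hypothetical assumptions, not measurements of Véspera or of any real system.

```python
def expected_alerts(people_scanned: int,
                    enrolled_present: int,
                    true_positive_rate: float,
                    false_positive_rate: float) -> tuple[float, float, float]:
    """Return (expected true alerts, expected false alerts, precision).

    All inputs are hypothetical; real error rates vary with lighting,
    camera angle, demographics, and many other factors.
    """
    non_enrolled = people_scanned - enrolled_present
    true_alerts = enrolled_present * true_positive_rate
    false_alerts = non_enrolled * false_positive_rate
    total = true_alerts + false_alerts
    # Precision: the share of alerts that point at an actually enrolled person.
    precision = true_alerts / total if total else 0.0
    return true_alerts, false_alerts, precision


# Hypothetical scenario: 10,000 passers-by, 5 of them on a watchlist,
# 95% hit rate, 1% false-match rate per person scanned.
true_alerts, false_alerts, precision = expected_alerts(10_000, 5, 0.95, 0.01)
print(f"true alerts: {true_alerts:.2f}")
print(f"false alerts: {false_alerts:.2f}")
print(f"share of alerts that are correct: {precision:.1%}")
```

Under these assumed numbers, roughly a hundred false alerts accompany fewer than five true ones, so most alerts would point at the wrong person even though the per-person false-match rate looks small.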

Véspera must not be used as the sole basis for any decision that could significantly affect a person, including:

Any such action requires an independently lawful basis, documented procedures, and competent human assessment. Even then, many deployments will still be unlawful or indefensible.

As a default rule, Véspera is not intended, marketed, licensed, or authorized for:

If a user nevertheless deploys the Software for any of the above, that deployment is undertaken solely at the user's risk and responsibility and may be unlawful even if technically feasible.

In many jurisdictions, cameras may be used in lower-risk scenarios where people are not identifiable. A common example is a tourism, landscape, coastline, city-view, mountain-view, or weather camera in which any passing people appear only as incidental, distant, low-resolution, non-identifiable figures. If no natural person can reasonably be identified directly or indirectly, data protection law may not apply in the same way, or may not apply at all. That depends on the real-world identifiability of the people shown, not on wishful thinking or labels.

Safer deployments generally include:

Once a system moves from anonymous scenery to identifiable persons, the legal risk profile changes abruptly.

Facial recognition generally involves biometric data or biometric processing when used to uniquely identify a natural person. That usually triggers stricter legal rules. Body-based, silhouette-based, gait-like, and clothing-based recognition may not fall within the strict statutory definition of biometric data in every jurisdiction, but they may still constitute personal data processing, behavioral tracking, profiling, or unlawful surveillance if they enable singling out, linking, re-identification, or repeated observation of an identifiable person.

The user is solely responsible for the way Véspera is configured, integrated, deployed, connected, trained, monitored, and used. The user is also solely responsible for determining whether a deployment is lawful in each jurisdiction and for ensuring compliance with all applicable laws, regulations, permits, notices, consents, contracts, policies, labor requirements, evidentiary standards, and authority instructions.

Without limitation, the user is responsible for:

The provider of Véspera does not assume responsibility for unlawful, excessive, deceptive, covert, discriminatory, or disproportionate use by any user or third party.

Across the European Union, the main baseline is not a "directive" but the General Data Protection Regulation, or GDPR. For law-enforcement processing by competent authorities, the relevant EU instrument is the Law Enforcement Directive. For AI-specific restrictions, the EU AI Act now also matters.

Under the GDPR, if a person can be identified directly or indirectly from video, images, metadata, or linked context, the processing is subject to data protection law. Biometric data used for the purpose of uniquely identifying a natural person is treated as a special category of personal data and is subject to stricter conditions. Video surveillance must have a specified purpose, a valid legal basis, necessity, proportionality, transparency, minimization, security, and limited retention. Large-scale monitoring of publicly accessible areas, or large-scale processing of sensitive data, may require a formal impact assessment.

If individuals are not identifiable directly or indirectly, EU data protection law may not apply in the same way. That is the narrow lane in which scenic tourism cameras, wide landscape feeds, and similar deployments may sometimes operate. But if zoom, resolution, cross-referencing, watchlists, metadata, or context can identify a person, treat the deployment as regulated personal-data processing.

Consent from a few enrolled persons does not automatically legalize facial recognition in a place where other people are also captured. If bystanders or other guests are being scanned in order to detect a specific person, they also matter. That point is often ignored in product pitches and often painful in real enforcement.

The EU AI Act adds another layer. Its prohibitions on certain AI practices have applied since February 2, 2025. The Act becomes generally applicable on August 2, 2026, with staggered implementation for some categories. Real-time remote biometric identification in publicly accessible spaces for law enforcement is subject to very narrow and exceptional conditions, including authorization requirements. Outside those narrow scenarios, a deployment may still be unlawful under the GDPR, national law, or both. In short: "AI" is not a compliance shortcut; it is usually the opposite.

France: France applies the GDPR together with the French data protection framework under the CNIL's supervision. CNIL has repeatedly stressed that facial recognition is privacy-intrusive, probabilistic rather than certain, and carries serious risks. France has also publicly emphasized that certain "augmented camera" deployments must not use facial recognition.

Germany: Germany applies the GDPR together with national rules and guidance under the German data protection framework. German authorities have emphasized necessity, proportionality, and restrictions on video surveillance by non-public bodies, including CCTV, neighborhood cameras, and similar systems. Germany is not a place where "security" automatically wins the argument.

Spain: Spain applies the GDPR together with Organic Law 3/2018 and AEPD guidance and decisions. Spanish guidance treats facial recognition in video surveillance as special-category processing, and the AEPD has taken a restrictive position toward biometric uses lacking a sufficiently specific legal basis.

Italy: Italy applies the GDPR together with the Italian Privacy Code and Garante enforcement. The Garante has made clear that general data protection rules apply to video surveillance and has acted against municipal facial recognition deployments.

Portugal: Portugal applies the GDPR together with Law 58/2019 and sector-specific rules, including private security and labor rules where relevant. CNPD guidance makes clear that installing or renewing video surveillance systems may trigger multiple overlapping legal requirements.

Netherlands: The Dutch DPA states that organizational use of facial recognition is almost always prohibited and that additional prior engagement with the authority may be required in some cases.

Belgium: Belgian guidance stresses that biometric data is strongly protected under Article 9 GDPR and generally prohibited unless a specific exception applies. Belgium has also issued recommendations specifically addressing biometric processing.

Ireland: Irish guidance states that CCTV footage containing identifiable individuals is personal data, that excessive monitoring can be unlawful, and that organizations should maintain a clear CCTV data protection policy.

Sweden: Swedish guidance states that facial recognition processes biometric data and is subject to special GDPR rules. Sweden also changed its camera-surveillance rules in 2025 so that the permit system shifted, but that did not create a free-for-all; organizations must still perform and document the required legal balancing and compliance analysis.

Denmark: Danish guidance confirms that images of recognizable persons are personal data and that Danish data protection legislation remains applicable alongside the GDPR.

Practical EU conclusion: For ordinary commercial users, Véspera should not be deployed for facial, body, or clothing-based identification or tracking of people in public or semi-public spaces unless specialized counsel confirms a lawful basis and the deployment is narrowly designed, documented, and defensible. For many business cases, that confirmation will not be easy to obtain.

The United States does not have one single comprehensive federal data protection law equivalent to the GDPR. Instead, it has a patchwork of federal, state, sector-specific, municipal, consumer-protection, employment, wiretap, and biometric laws. That patchwork is not simpler. It is just messier.

At the federal level, the Federal Trade Commission has warned about misuse of biometric information and facial recognition, including privacy, security, bias, and discrimination concerns. The FTC has also brought enforcement actions where facial recognition systems were deployed without reasonable safeguards, including a case in which a retailer was prohibited from using AI facial recognition for surveillance purposes for five years after alleged failures that harmed consumers.

This means that in the United States, a deployment can create liability even without a GDPR-style national privacy code, particularly if the company made misleading claims, failed to secure data, ignored known inaccuracies, used the system unfairly, or caused foreseeable harm.

Some states impose specific rules on biometric identifiers or biometric data.

Illinois: Illinois' Biometric Information Privacy Act is among the strictest. It regulates biometric identifiers and biometric information used to identify individuals and is famous for litigation risk.

Texas: Texas regulates the capture or use of biometric identifiers for commercial purposes and now also has a broader consumer privacy statute.

Washington: Washington restricts enrolling biometric identifiers in a database for commercial purposes without notice, consent, or an alternative mechanism to prevent later commercial use.

These statutes matter because facial recognition can expose a deployer to litigation, regulatory action, or both.

A growing number of states now have broader privacy statutes that regulate personal data and, often, sensitive data. Major examples include California, Virginia, Colorado, Connecticut, Texas, Oregon, New Jersey, and Delaware. These laws typically require some combination of notice, transparency, consumer rights, purpose limitation, contracts, security measures, and heightened obligations for sensitive data.

California is especially important because the CCPA/CPRA framework gives consumers rights over personal information and sensitive personal information. Colorado, Virginia, Connecticut, Texas, Oregon, New Jersey, and Delaware also have operative privacy laws with different thresholds, definitions, exemptions, and enforcement structures.

Some U.S. cities impose stricter rules. Portland, Oregon, for example, prohibits private entities from using face recognition technologies in places of public accommodation, subject to limited exceptions.

Practical U.S. conclusion: In the United States, it is unsafe to assume that facial recognition or person-tracking is lawful merely because it is technically possible or because there is no single federal privacy code. The legal question depends on the state, the city, the purpose, the setting, the data type, the notice model, the retention model, the promises made to users, and the real-world risk of misidentification or discrimination.

Brazil has a general data protection law, the LGPD. Under the LGPD, biometric data linked to a natural person is sensitive personal data, which is subject to stricter rules. Brazil therefore cannot be treated as a permissive environment for facial recognition just because the market sometimes behaves as though regulation were optional. It is not.

The Brazilian National Data Protection Authority, or ANPD, has publicly identified biometrics and facial recognition as a priority and high-risk area, citing privacy risks, discriminatory effects, and harms from system errors. ANPD has also opened public consultation and regulatory work specifically focused on biometric data.

ANPD has further stated that facial recognition for identity verification is not automatically prohibited under the LGPD, but it involves sensitive personal data and heightened risk. That means legal basis, necessity, transparency, security, governance, and impact assessment discipline matter greatly. The situation is even more sensitive where children and adolescents may be affected.

Brazil also has legal complexities around public-security, criminal-investigation, and related exceptions. Those exceptions do not provide a blanket commercial safe harbor for ordinary private-sector surveillance. Private deployers should not assume that invoking "security" ends the analysis. It does not. ANPD has already scrutinized facial-recognition deployments in high-traffic settings such as football stadium access and highlighted serious compliance concerns, including treatment of sensitive data and children's data.

Practical Brazil conclusion: In Brazil, Véspera should be treated as a potentially high-risk data processing system whenever it can identify, verify, single out, or persistently track a person. Scenic, environmental, and tourism uses where persons are not identifiable are generally lower risk. Person identification or watchlist-style deployment is a different legal animal altogether.

For ordinary commercial deployments, the safest default position is:

By using, integrating, reselling, deploying, operating, or configuring Véspera, the user acknowledges that:

If there is doubt, do not activate the identifying function until qualified counsel and the relevant authorities, where appropriate, have been consulted.

End of Notice.

This feature exists because the legal risk is real, not because a red button makes the problem disappear.